Member of IKAPI

No. 054/SSL/2023

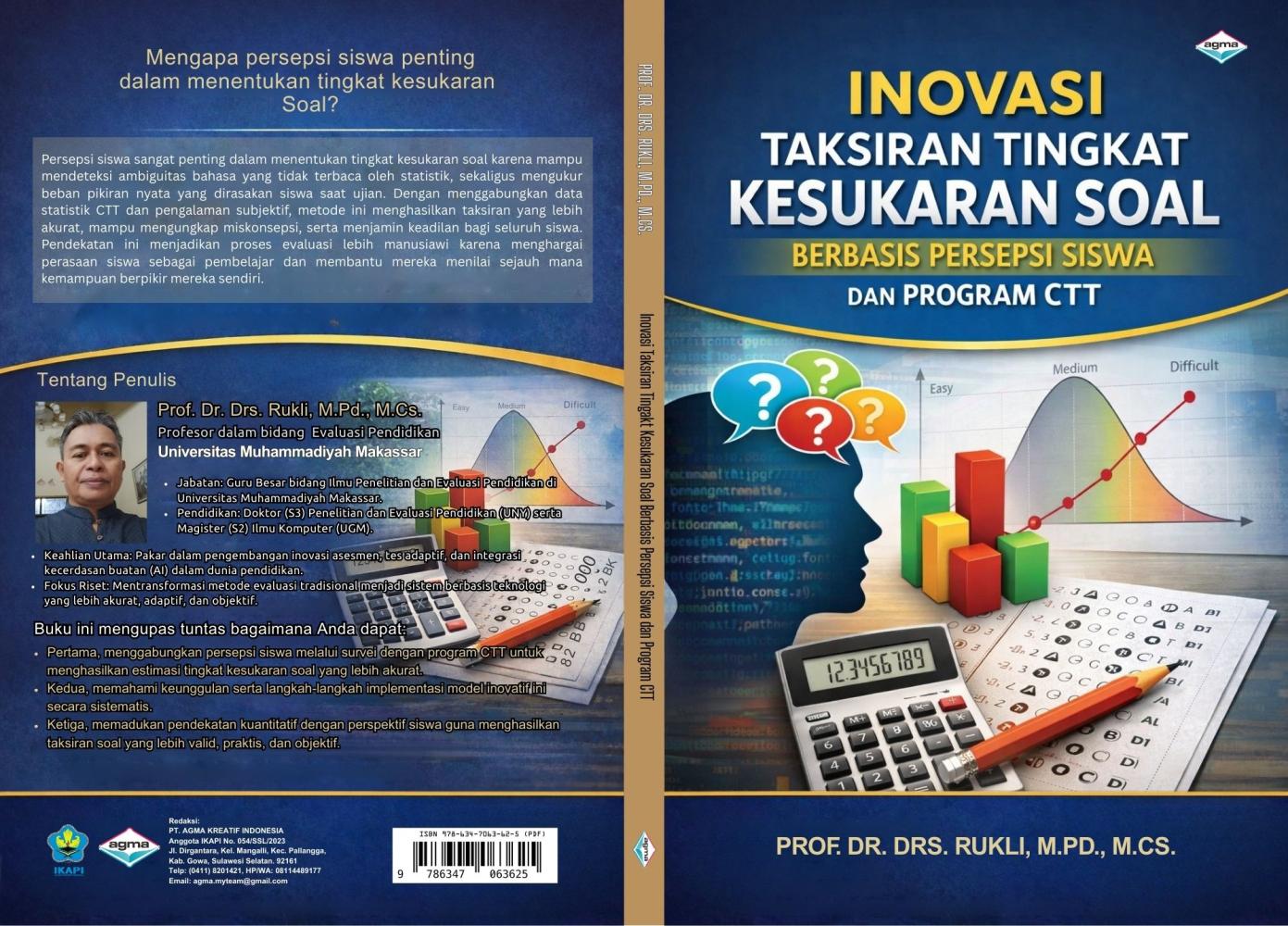

The book INOVASI TAKSIRAN TINGKAT KESUKARAN SOAL BERBASIS PERSEPSI SISWA DAN PROGRAM CTT (Innovation in Estimating Item Difficulty Based on Student Perception and CTT Programs) explains why student perspectives matter in the evaluation process: they help detect item ambiguity that conventional statistics cannot reach. In it, you will learn how to integrate perception surveys with Classical Test Theory (CTT) programs to produce item difficulty estimates that are more accurate, valid, and humane. Through practical guidance and the implementation of an innovative model, the book offers educators a way to combine quantitative approaches with students' cognitive realities, resulting in an assessment system that is fairer and that uncovers learning misconceptions effectively.


Penerbit AGMA by:

Editorial Officer

Jl. Dirgantara, Kel. Mangalli, Kec. Pallangga, Kab. Gowa, Sulawesi Selatan. 92161.

Tel: (0411) 8201421, Mobile/WhatsApp: 081355428007.

e-mail: redaksi@penerbitagma.my.id, website: book.penerbitagma.my.id/index.php/agma

Penerbit AGMA is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.